Since Blazor is now officially no longer experimental (meaning it is now officially in preview), I figured I’d try to mix it with my other favorite thing right now… Bots! (For those of you unfamiliar with Blazor, the short description is “run C# libraries in the browser!”)

So what would a bot look like if it ran on Blazor? It would mean running our bot directly in the browser instead of in a web service (while still having the option to move it to the server if we wanted to).

For this demonstration, I’m going to use a very simple bot: the Echo bot from the BotBuilder Samples repo. This lets us focus on how the bot gets connected to a Blazor app without worrying about typical bot things (LUIS, conversation management, state, etc.). We’ll talk about the possible impact on those things in the wrap-up.

To get our bot running in a Blazor client-side app, we need to follow these steps:

- Create a standard Blazor app

- Add the echo bot to the Blazor app

- Create the Blazor bot adapter

- Update the Blazor app to talk to the bot adapter

Create a Blazor app

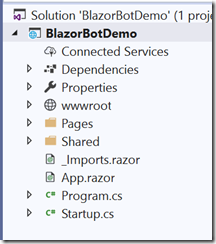

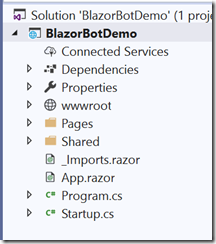

For this concept we are just going to start with the default Blazor (client-side) template. Just follow the Getting Started instructions in the Blazor documentation and make sure to select the Blazor (client-side) template or the Blazor (ASP.NET Core Hosted) template when that choice comes up.

NOTE: At the time of this writing, you need Visual Studio 2019 Preview to run Blazor apps (the preview that came out after the release of VS 2019).

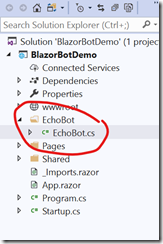

You should end up with a solution that looks something like this

Run the app. Click around and make sure it is functional. Once we verify that we have a running Blazor app, it’s time to…

Add the echo bot to the Blazor app

Add the bot references

First we need to add the bot assembly references to the app. Bot Framework v4 is built on .NET Standard 2.0 libraries, so they will (mostly) run inside Blazor. Use NuGet to add a reference to Microsoft.Bot.Builder v4.x. When you build the app now, you may get an error similar to:

Cannot find declaration of exported type ‘System.Threading.Semaphore’ from the assembly ‘System.Threading, Version=4.0.12.0, Culture=neutral, PublicKeyToken=b03f5f7f11d50a3a’

This is because Blazor attempts to do a linking step at build time. This optimization removes unnecessary IL from the app’s output assemblies. Unfortunately, it causes a problem with the current version, so you need to disable it by adding the following line to the main PropertyGroup section of your BlazorBot.Client.csproj file:

```xml
<BlazorLinkOnBuild>false</BlazorLinkOnBuild>
```

This tells the build to skip the linking phase. Because the bot doesn’t use the code that references System.Threading.Semaphore at runtime, it won’t become a problem later.

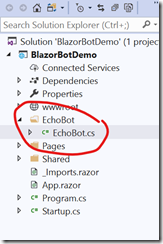

Add the bot

Create a folder in the project named “Bot” and create a new class “EchoBot” in that folder. Copy the EchoBot code from the sample https://github.com/Microsoft/BotBuilder-Samples/blob/master/samples/csharp_dotnetcore/01.console-echo/EchoBot.cs
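For reference, a minimal echo bot looks roughly like the sketch below. It is written in the spirit of the console echo sample rather than copied from it, so treat the reply wording as an assumption. The namespace matches the Console_EchoBot namespace that the Blazor page imports later.

```csharp
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Bot.Builder;
using Microsoft.Bot.Schema;

namespace Console_EchoBot
{
    // Minimal echo bot sketch; it knows nothing about Blazor or the adapter hosting it.
    public class EchoBot : IBot
    {
        public async Task OnTurnAsync(ITurnContext turnContext, CancellationToken cancellationToken = default(CancellationToken))
        {
            // Only respond to messages; ignore conversationUpdate, typing, etc.
            if (turnContext.Activity.Type == ActivityTypes.Message)
            {
                // Sends the reply back through whichever adapter is running the bot.
                await turnContext.SendActivityAsync($"You sent '{turnContext.Activity.Text}'", cancellationToken: cancellationToken);
            }
        }
    }
}
```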

This is a standard bot. There is nothing specific to Blazor about it. Next we need to…

Create the Blazor bot adapter

What is an adapter? From the Console Bot sample:

> Adapters provide an abstraction for your bot to work with a variety of environments.
>
> A bot is directed by its adapter, which can be thought of as the conductor for your bot. The adapter is responsible for directing incoming and outgoing communication, authentication, and so on. The adapter differs based on its environment (the adapter internally works differently locally versus on Azure) but in each instance it achieves the same goal.

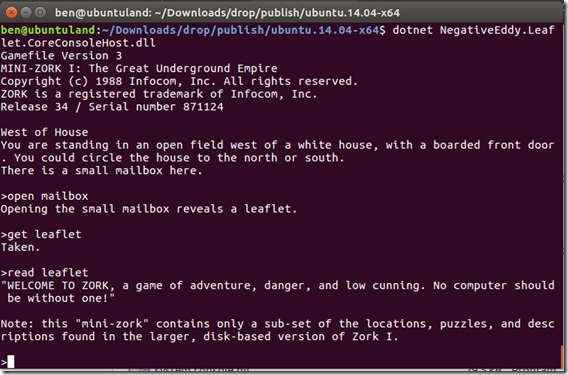

Blazor is not one of the standard channels for Bot Framework, so we need to create our own adapter to route messages in and out of the bot. Also, because a Blazor client-side app doesn’t refresh the page, the bot is created only once and reused over and over. This is very similar to the Console Bot sample, so we will use its adapter as a base.

The primary difference is that the Console adapter runs an infinite loop pulling input from the command line. We will provide a mechanism for the input to be fed in via a method call, and an event to notify the host when a bot response happens.

Add a class named BlazorAdapter to the EchoBot folder.

Create a public event on the BlazorAdapter for the client to subscribe to in order to receive new activities from the bot.

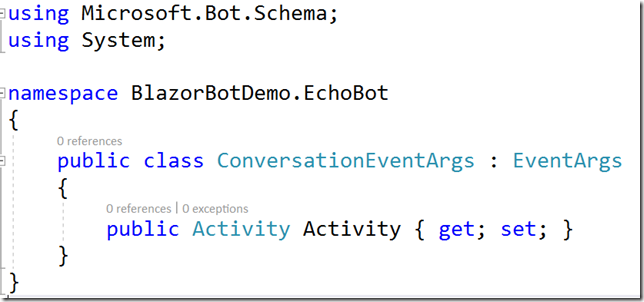

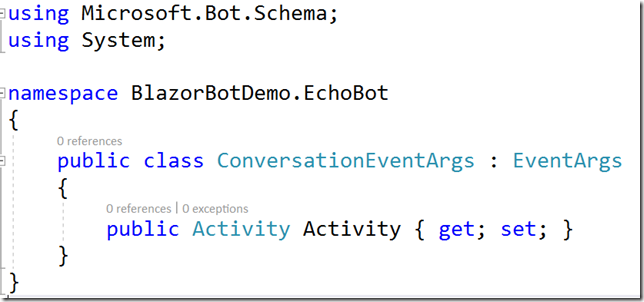

Create a class named ConversationEventArgs defined like this

This is the event argument type the bot adapter passes whenever it raises the event to communicate with the client. The bot activity is passed in the argument’s Activity property.
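A minimal sketch of the event arguments and the event, assuming the event is named ActivitySent (a hypothetical name, since the original listing isn’t reproduced here). OnActivitySent is an added helper that follows the usual .NET pattern for raising an event; at this stage BlazorAdapter does not yet derive from BotAdapter.

```csharp
using System;
using Microsoft.Bot.Schema;

namespace BlazorBotDemo.EchoBot
{
    // Carries one activity from the bot adapter to its subscriber (the Blazor page).
    public class ConversationEventArgs : EventArgs
    {
        public ConversationEventArgs(Activity activity)
        {
            Activity = activity ?? throw new ArgumentNullException(nameof(activity));
        }

        // The activity the bot sent; for the echo bot this is a message with Text set.
        public Activity Activity { get; }
    }

    public class BlazorAdapter
    {
        // Hypothetical event name: raised once for every activity the bot sends.
        public event EventHandler<ConversationEventArgs> ActivitySent;

        // Hypothetical helper; the null-conditional call skips raising when nobody subscribed.
        protected virtual void OnActivitySent(Activity activity)
        {
            ActivitySent?.Invoke(this, new ConversationEventArgs(activity));
        }
    }
}
```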

Now modify the BlazorAdapter so that it derives from BotAdapter. The abstract class BotAdapter has 3 abstract methods you must override, but we will only need to implement one of them for this exercise.

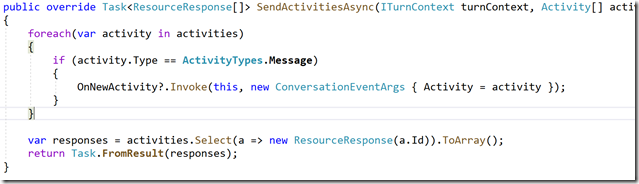

- SendActivitiesAsync – this is invoked whenever the bot sends activities back to the client. We will use it to raise an event to notify the client UI a new activity is available.

- UpdateActivityAsync – this is used to replace an existing activity in a conversation. We won’t need it.

- DeleteActivityAsync – this is used to delete an activity from a conversation. We won’t need it.

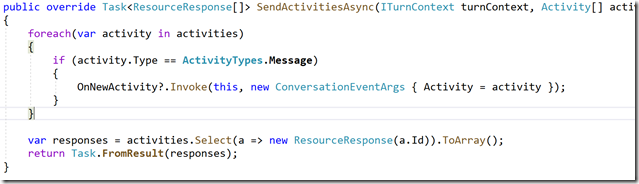

Override the SendActivitiesAsync method with the following

Whenever the bot sends a response, this method is called, and the adapter raises the event once for each activity the bot sends.
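Here is a sketch of the adapter at this point, assuming an event named ActivitySent (hypothetical). It repeats ConversationEventArgs so it compiles on its own and leaves UpdateActivityAsync and DeleteActivityAsync unimplemented, as described above. Generating an id for outgoing activities that lack one is an added assumption so that every ResourceResponse has something to return.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Bot.Builder;
using Microsoft.Bot.Schema;

namespace BlazorBotDemo.EchoBot
{
    public class ConversationEventArgs : EventArgs
    {
        public ConversationEventArgs(Activity activity) => Activity = activity;
        public Activity Activity { get; }
    }

    public class BlazorAdapter : BotAdapter
    {
        // Hypothetical event name; the Blazor page subscribes to it.
        public event EventHandler<ConversationEventArgs> ActivitySent;

        // Called by the Bot Builder pipeline whenever the bot sends activities,
        // e.g. via turnContext.SendActivityAsync in the echo bot.
        public override Task<ResourceResponse[]> SendActivitiesAsync(
            ITurnContext turnContext, Activity[] activities, CancellationToken cancellationToken)
        {
            var responses = new ResourceResponse[activities.Length];
            for (var i = 0; i < activities.Length; i++)
            {
                var activity = activities[i];

                // Assumption: give each outgoing activity an id to report back.
                if (string.IsNullOrEmpty(activity.Id))
                {
                    activity.Id = Guid.NewGuid().ToString();
                }

                // Notify the UI directly; nothing goes over the network.
                ActivitySent?.Invoke(this, new ConversationEventArgs(activity));
                responses[i] = new ResourceResponse(activity.Id);
            }

            // All work is synchronous, so return a completed task.
            return Task.FromResult(responses);
        }

        // Not needed here: the UI never edits or deletes a message it has shown.
        public override Task<ResourceResponse> UpdateActivityAsync(
            ITurnContext turnContext, Activity activity, CancellationToken cancellationToken)
            => throw new NotImplementedException();

        public override Task DeleteActivityAsync(
            ITurnContext turnContext, ConversationReference reference, CancellationToken cancellationToken)
            => throw new NotImplementedException();
    }
}
```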

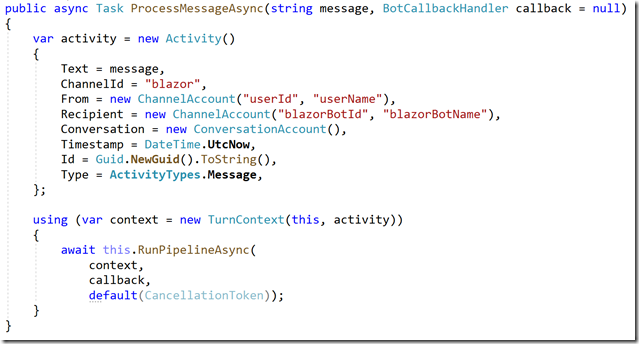

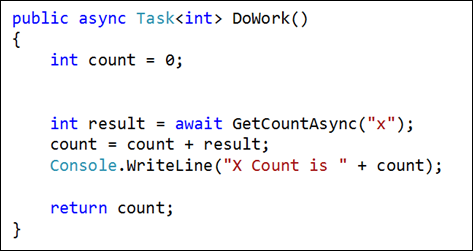

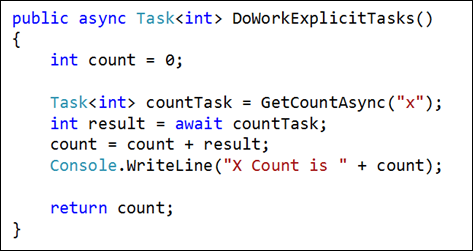

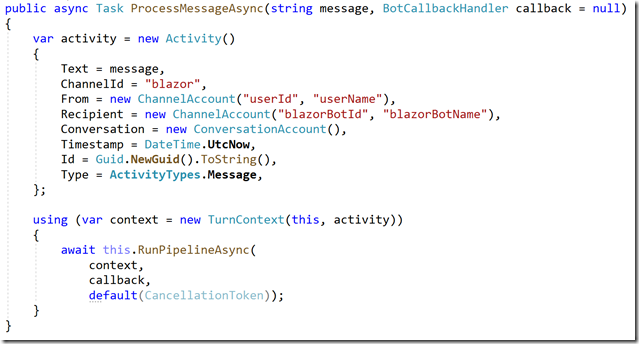

Now add a method to the adapter called ProcessMessageAsync which we will use to send messages to the bot. It looks like this

This method simply takes a string, turns it into a bot activity with default values, and runs the bot pipeline.
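A sketch of ProcessMessageAsync, modeled loosely on the console adapter’s input loop but driven by a method call instead of Console.ReadLine. Assumptions: the bot’s turn handler is passed in as a BotCallbackHandler, and the channel, user, bot, and conversation ids ("blazor", "user", "bot", "blazor-conversation") are placeholder values. The rest of the adapter is repeated so the block compiles on its own.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Bot.Builder;
using Microsoft.Bot.Schema;

namespace BlazorBotDemo.EchoBot
{
    public class ConversationEventArgs : EventArgs
    {
        public ConversationEventArgs(Activity activity) => Activity = activity;
        public Activity Activity { get; }
    }

    public class BlazorAdapter : BotAdapter
    {
        // Hypothetical event name, raised for each activity the bot sends.
        public event EventHandler<ConversationEventArgs> ActivitySent;

        // Wraps the user's text in a message activity and runs it through the
        // middleware pipeline and then the bot's turn handler (the callback).
        public async Task ProcessMessageAsync(
            string message, BotCallbackHandler callback, CancellationToken cancellationToken = default(CancellationToken))
        {
            var activity = new Activity
            {
                Type = ActivityTypes.Message,
                Id = Guid.NewGuid().ToString(),
                Text = message,
                // Placeholder ids: they only need to be consistent between turns.
                ChannelId = "blazor",
                From = new ChannelAccount(id: "user", name: "User"),
                Recipient = new ChannelAccount(id: "bot", name: "Bot"),
                Conversation = new ConversationAccount(id: "blazor-conversation"),
                Timestamp = DateTimeOffset.UtcNow,
            };

            using (var context = new TurnContext(this, activity))
            {
                await RunPipelineAsync(context, callback, cancellationToken);
            }
        }

        public override Task<ResourceResponse[]> SendActivitiesAsync(
            ITurnContext turnContext, Activity[] activities, CancellationToken cancellationToken)
        {
            var responses = new ResourceResponse[activities.Length];
            for (var i = 0; i < activities.Length; i++)
            {
                activities[i].Id = activities[i].Id ?? Guid.NewGuid().ToString();
                ActivitySent?.Invoke(this, new ConversationEventArgs(activities[i]));
                responses[i] = new ResourceResponse(activities[i].Id);
            }

            return Task.FromResult(responses);
        }

        public override Task<ResourceResponse> UpdateActivityAsync(
            ITurnContext turnContext, Activity activity, CancellationToken cancellationToken)
            => throw new NotImplementedException();

        public override Task DeleteActivityAsync(
            ITurnContext turnContext, ConversationReference reference, CancellationToken cancellationToken)
            => throw new NotImplementedException();
    }
}
```

Under these assumptions the page would call it as await adapter.ProcessMessageAsync(text, bot.OnTurnAsync), since IBot.OnTurnAsync matches the BotCallbackHandler delegate shape.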

Now that the adapter is built, we just need to put the bot and the adapter on the Blazor page and wire them up.

Update the Blazor app to talk to the bot adapter

Now we need to build a UI that interacts with the bot adapter. We can’t use the standard webchat control because it assumes you want to talk to the bot connector service in the standard scenario. We need the UI to accept some text as input and respond to the bot adapter’s event for output from the bot.

It’s theoretically possible to modify the webchat control to redirect its input/output directly to our adapter, but that’s beyond the scope of this simple experiment.

Open the counter.razor page and place the following using statements immediately below the @page "/counter" directive:

```razor
@using Console_EchoBot
@using BlazorBotDemo.EchoBot
@using Microsoft.Bot.Schema
@using Microsoft.Bot.Builder
```

Console_EchoBot is the namespace of the actual bot.

BlazorBotDemo.EchoBot is the namespace of your BlazorAdapter class.

The other two namespaces are needed for the upcoming code.

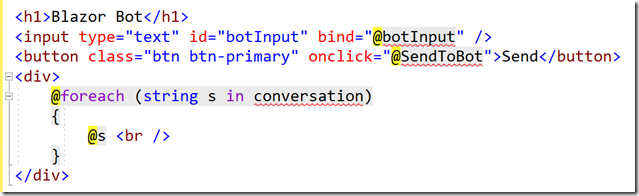

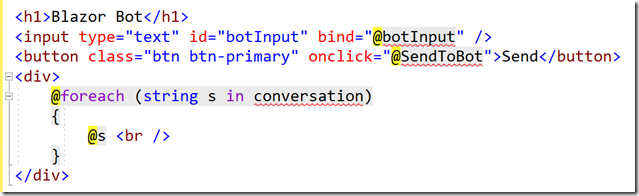

Replace the HTML section with the following

This has one input textbox to send messages to the bot; the output from the bot is placed into the conversation variable, which is a collection of strings.
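A minimal sketch of that markup, written against the preview-era component syntax that goes with the @functions/OnInit code in this post (bind="@..." and onclick="@..."; later Blazor releases switched to @bind and @onclick). The field name messageText is hypothetical; conversation and SendToBot follow the names used in the text.

```razor
<h1>Blazor bot</h1>

<!-- User input, bound to the hypothetical messageText field -->
<input bind="@messageText" />
<button onclick="@SendToBot">Send</button>

<!-- One list item per line of the conversation -->
<ul>
    @foreach (var line in conversation)
    {
        <li>@line</li>
    }
</ul>
```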

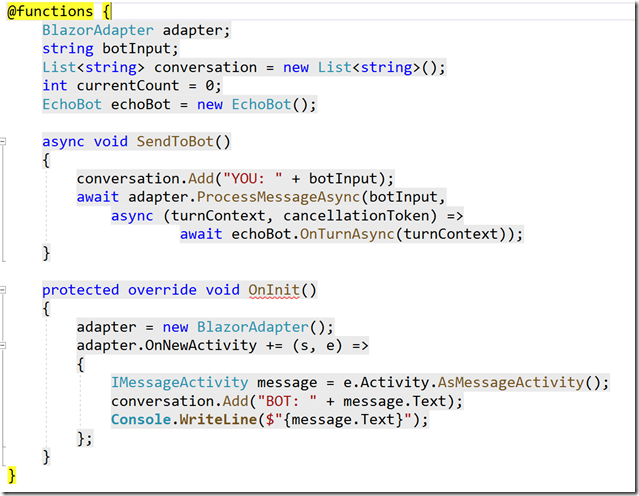

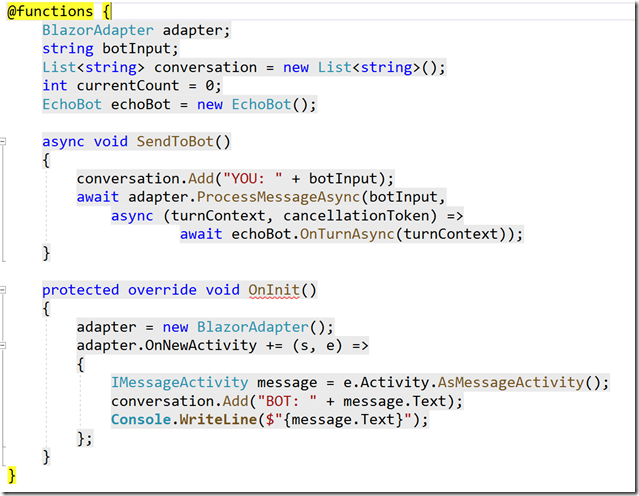

Replace the @functions section with the following

The OnInit function creates the adapter and wires up the event to receive activities from the bot. When an activity is received, we extract the message and update the conversation collection with the new message.

The SendToBot function is invoked when the user clicks the button. At that time we take the string from the textbox and tell the adapter to process it, sending it to the bot.
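A sketch of the @functions section under the same assumptions: a hypothetical ActivitySent event and a ProcessMessageAsync(string, BotCallbackHandler) method on the adapter, plus the hypothetical messageText field. OnInit is the preview-era lifecycle method (later releases renamed it OnInitialized). The bot type is fully qualified to sidestep any confusion with the BlazorBotDemo.EchoBot namespace.

```razor
@functions {
    // Text bound to the input box (hypothetical field name).
    string messageText;

    // Lines shown in the conversation list, from both the user and the bot.
    List<string> conversation = new List<string>();

    BlazorAdapter adapter;
    Console_EchoBot.EchoBot bot;

    protected override void OnInit()
    {
        // Created once per page instance and reused for every message.
        bot = new Console_EchoBot.EchoBot();
        adapter = new BlazorAdapter();

        // Runs once for every activity the bot sends back through the adapter.
        adapter.ActivitySent += (sender, e) =>
        {
            if (e.Activity.Type == ActivityTypes.Message)
            {
                conversation.Add($"Bot: {e.Activity.Text}");
                StateHasChanged();
            }
        };
    }

    async Task SendToBot()
    {
        if (string.IsNullOrWhiteSpace(messageText))
        {
            return;
        }

        var text = messageText;
        conversation.Add($"You: {text}");
        messageText = string.Empty;

        // Runs the bot pipeline in the browser; replies arrive via ActivitySent.
        await adapter.ProcessMessageAsync(text, bot.OnTurnAsync);
    }
}
```

Creating the bot and adapter in OnInit ties their lifetime to the page component, which matches the “created once and reused” behavior described earlier for as long as the user stays on the page.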

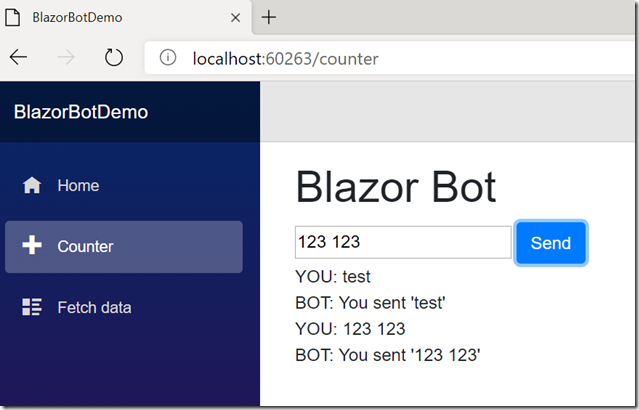

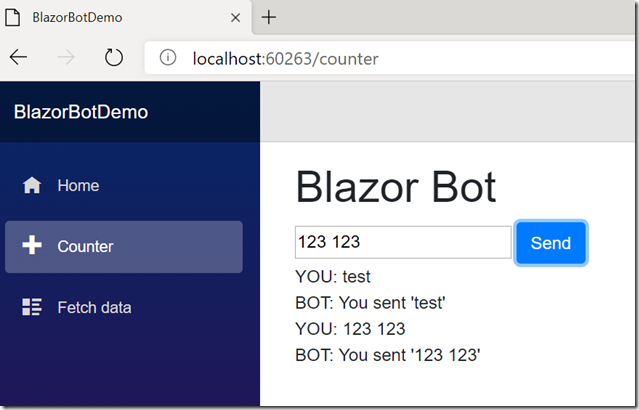

Run the app, go to the Counter page, and you should be able to interact with the bot!

A full running example of this is available at https://github.com/negativeeddy/BlazorBot

Happy coding!